Visualize your services' k8s runtime

Port’s Kubernetes integration helps you model and visualize your cluster’s workloads alongside your existing workloads in Port. This guide will help you set up the integration and visualize your services' Kubernetes runtime.

Common use cases

- Developers can easily view the health and status of their services' K8s runtime.

- Platform engineers can create custom views and dashboards for different stakeholders.

- Platform engineers can set, maintain, and track standards for Kubernetes resources.

- R&D managers can track data about services' Kubernetes resources, enabling high-level oversight and better decision-making.

Prerequisites

- This guide assumes you have a Port account and that you have finished the onboarding process. We will use the Workload blueprint that was created during the onboarding process.

- You will need an accessible K8s cluster. If you don't have one, here is how to quickly set up a minikube cluster.

- Helm - required to install Port's Kubernetes exporter.

Set up data model

To visualize your cluster's workloads in Port, we will first install Port’s Kubernetes exporter, which automatically creates Kubernetes-related blueprints and entities in your portal.

Install Port's Kubernetes exporter

To install the integration using Helm:

- Go to the Kubernetes data source page in your portal.

- A helm command will be displayed, with default values already filled out (e.g. your Port client ID, client secret, etc.). Copy the command, replace the placeholders with your values, then run it in your terminal to install the integration.

The port_region, port.baseUrl, portBaseUrl, port_base_url and OCEAN__PORT__BASE_URL parameters select which Port API instance to use:

- EU (app.port.io) → https://api.port.io

- US (app.us.port.io) → https://api.us.port.io
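As a minimal sketch, the region-to-URL choice above can be written as a simple lookup. The helper name `port_api_base_url` and the region keys `"eu"` and `"us"` are illustrative assumptions and not part of Port's tooling; only the two URLs come from this guide.

```python
# Hypothetical helper: the keys "eu" and "us" are illustrative labels.
# Only the URLs themselves are taken from this guide.
PORT_API_BASE_URLS = {
    "eu": "https://api.port.io",     # portal at app.port.io
    "us": "https://api.us.port.io",  # portal at app.us.port.io
}


def port_api_base_url(region: str) -> str:
    """Return the Port API base URL for a region label ("eu" or "us")."""
    key = region.strip().lower()
    if key not in PORT_API_BASE_URLS:
        raise ValueError(f"unknown region: {region!r}")
    return PORT_API_BASE_URLS[key]


print(port_api_base_url("us"))
```

Whichever parameter name your installation method uses, the value passed should match the region of the portal you log in to.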

What does the exporter do?

After installation, the exporter will:

-

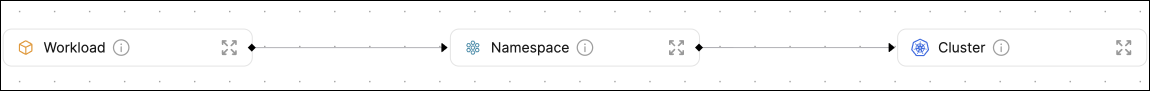

Create blueprints in your Builder (as defined here) that represent Kubernetes resources:

What is K8sWorkload?K8sWorkloadis an abstraction of Kubernetes objects which create and manage pods (e.g.Deployment,StatefulSet,DaemonSet).

-

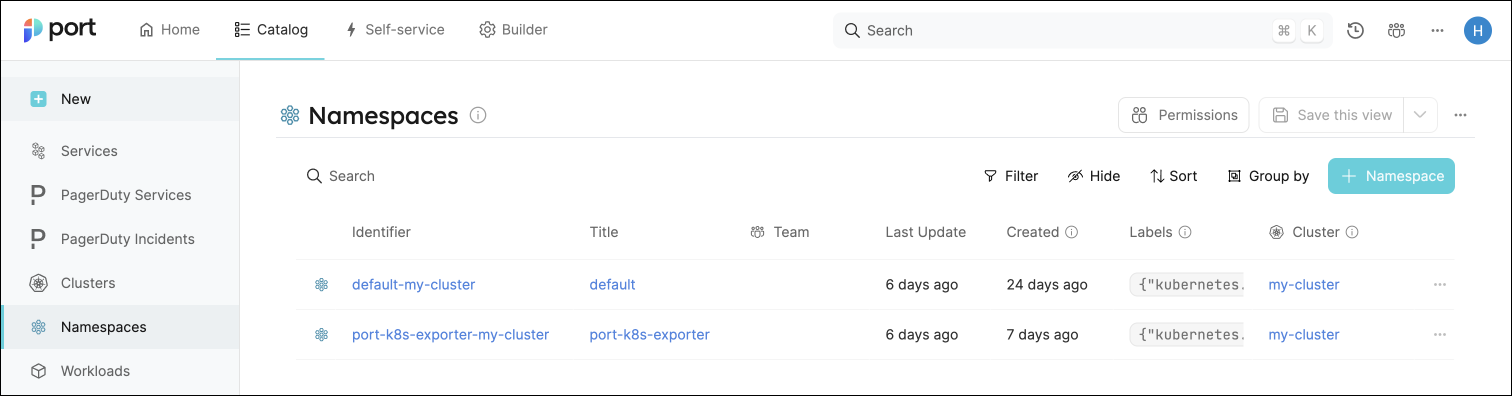

Create entities in your Software catalog. You will see a new page for each blueprint containing your resources, filled with data from your Kubernetes cluster (according to the default mapping that is defined here):

- Create scorecards for the blueprints that represent your K8s resources (as defined here). These scorecards define rules and checks over the data ingested from your K8s cluster, making it easy to check that your K8s resources meet your standards.

- Create dashboards that provide you with a visual view of the data ingested from your K8s cluster.

- Listen to changes in your Kubernetes cluster and update your entities accordingly.

Set up automatic discovery

After installing the integration, the relationship between the Workload blueprint and the k8_workload blueprint is established automatically. To ensure each Workload entity is properly related to its respective k8_workload entity, we will configure automatic discovery using labels.

In this guide we will use the following convention:

A k8_workload with a label in the form of portWorkload: <workload-identifier> will automatically be assigned to a Workload with that identifier.

For example, a k8s deployment with the label portWorkload: myWorkload will be assigned to a Workload with the identifier myWorkload.

We achieved this by adding a mapping definition in the configuration YAML we used when installing the exporter. The definition uses jq to perform calculations between properties.

Let's see this in action:

- Create a Deployment resource in your cluster with a label matching the identifier of a Workload in your Software catalog.

You can use the simple example below and change the metadata.labels.portWorkload value to match your desired Workload. Copy it into a file named deployment.yaml, then apply it:

    kubectl apply -f deployment.yaml

Deployment example:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: awesomeapp
      labels:
        app: nginx
        portWorkload: AwesomeWorkload
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: nginx
              image: nginx:1.14.2
              resources:
                limits:
                  cpu: "200m"
                  memory: "256Mi"
                requests:
                  cpu: "100m"
                  memory: "128Mi"
              ports:
                - containerPort: 80

- To see the new data, we need to update the mapping configuration that the K8s exporter uses to ingest data.

To edit the mapping, go to your data sources page, find the K8s exporter card, and click on it to open a YAML editor showing the current configuration. Add the following block to the mapping configuration and click Resync:

    resources:
      # ... Other resource mappings installed by the K8s exporter
      - kind: apps/v1/deployments
        selector:
          query: .metadata.namespace | startswith("kube") | not
        port:
          entity:
            mappings:
              - identifier: .metadata.labels.portWorkload
                title: .metadata.name
                blueprint: '"workload"'
                relations:
                  k8s_workload: >-
                    .metadata.name + "-Deployment-" + .metadata.namespace + "-" +
                    env.CLUSTER_NAME

-

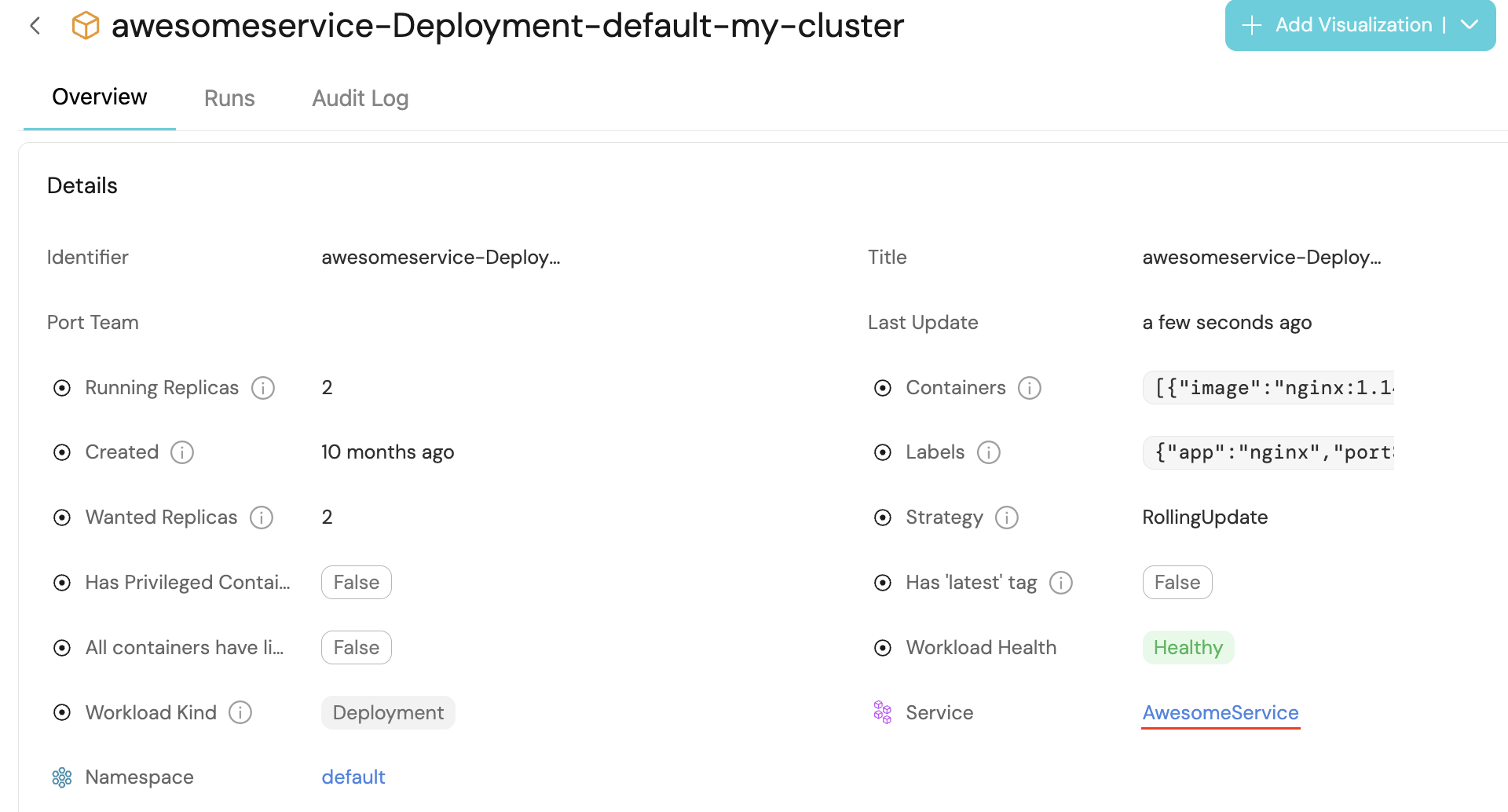

Go to your Software catalog, and click on

Workloads. Click on theWorkloadfor which you created the deployment, and you should see thek8_workloadrelation filled.
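To make the mapping block's jq expressions easier to follow, here is a rough Python sketch of what they compute for a single Deployment. It is not how the exporter is implemented. The function name `map_deployment`, the `default` namespace, and the cluster name `my-cluster` (standing in for `env.CLUSTER_NAME`) are assumptions made for illustration.

```python
from typing import Optional


def map_deployment(deployment: dict, cluster_name: str) -> Optional[dict]:
    """Mirror the mapping block: skip kube* namespaces, then build the entity."""
    metadata = deployment["metadata"]
    namespace = metadata["namespace"]
    # selector.query: .metadata.namespace | startswith("kube") | not
    if namespace.startswith("kube"):
        return None
    name = metadata["name"]
    return {
        # identifier: .metadata.labels.portWorkload (None if the label is missing)
        "identifier": metadata.get("labels", {}).get("portWorkload"),
        "title": name,
        "blueprint": "workload",
        # .metadata.name + "-Deployment-" + .metadata.namespace + "-" + env.CLUSTER_NAME
        "relations": {"k8s_workload": f"{name}-Deployment-{namespace}-{cluster_name}"},
    }


# The example Deployment from this guide, assumed to be applied to "default".
example = {
    "metadata": {
        "name": "awesomeapp",
        "namespace": "default",
        "labels": {"app": "nginx", "portWorkload": "AwesomeWorkload"},
    }
}
entity = map_deployment(example, cluster_name="my-cluster")
print(entity["identifier"])                 # AwesomeWorkload
print(entity["relations"]["k8s_workload"])  # awesomeapp-Deployment-default-my-cluster
```

The relation value has to match the identifier of the entity that the exporter created for the same Deployment, which is why it is built from the name, kind, namespace, and cluster name.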

Visualize data from your Kubernetes environment

We now have a lot of data about our workloads, and some metrics to track their quality. Let's see how we can visualize this information in ways that will benefit the routine of our developers and managers. Let's start by creating a few widgets that will help us keep track of our services' health and availability.

Add an "Unhealthy services" table to your homepage

In the configuration provided for this guide, a workload is considered Healthy if its defined number of replicas is equal to its available replicas (of course, you can change this definition).
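The health rule above can be sketched in a few lines of Python. The field names `replicas` and `available`, the sample workloads, and the `"Unhealthy"` label are hypothetical and chosen only for illustration; check your blueprint for the actual property names and values.

```python
def health_status(desired_replicas: int, available_replicas: int) -> str:
    """A workload is Healthy when desired replicas equal available replicas."""
    return "Healthy" if desired_replicas == available_replicas else "Unhealthy"


# Hypothetical sample data, not taken from a real cluster.
workloads = [
    {"title": "awesomeapp", "replicas": 2, "available": 2},
    {"title": "checkout", "replicas": 3, "available": 1},
]

# The rows an "Unhealthy services" table would be expected to show.
unhealthy = [
    w["title"]
    for w in workloads
    if health_status(w["replicas"], w["available"]) == "Unhealthy"
]
print(unhealthy)  # ['checkout']
```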

-

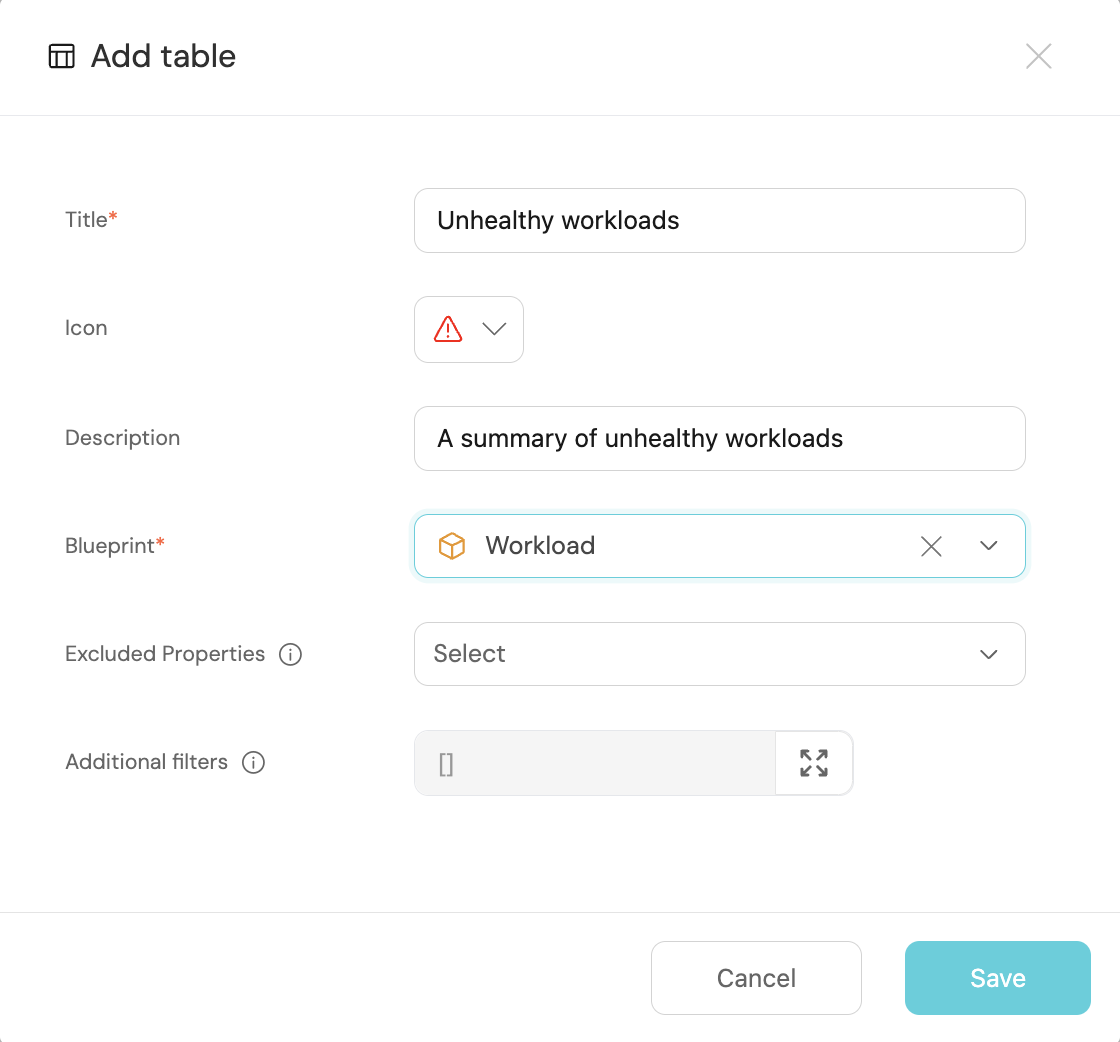

Go to your homepage, click on the

+ Widgetbutton in the top right corner, then selectTable. -

Fill the form out like this, then click

Save:

-

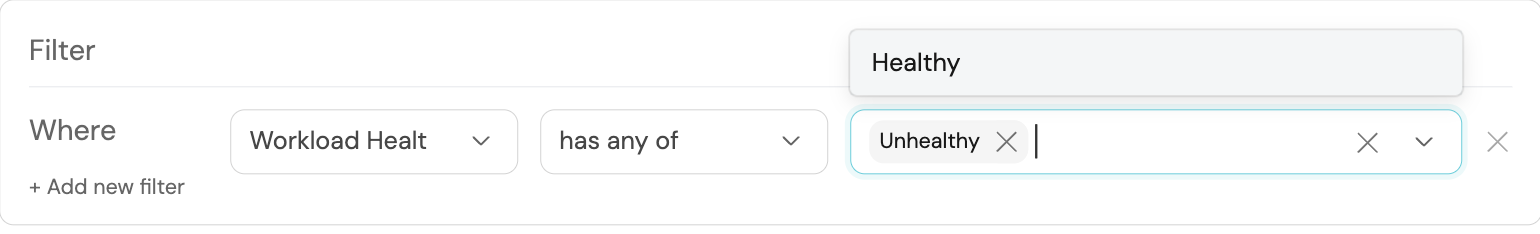

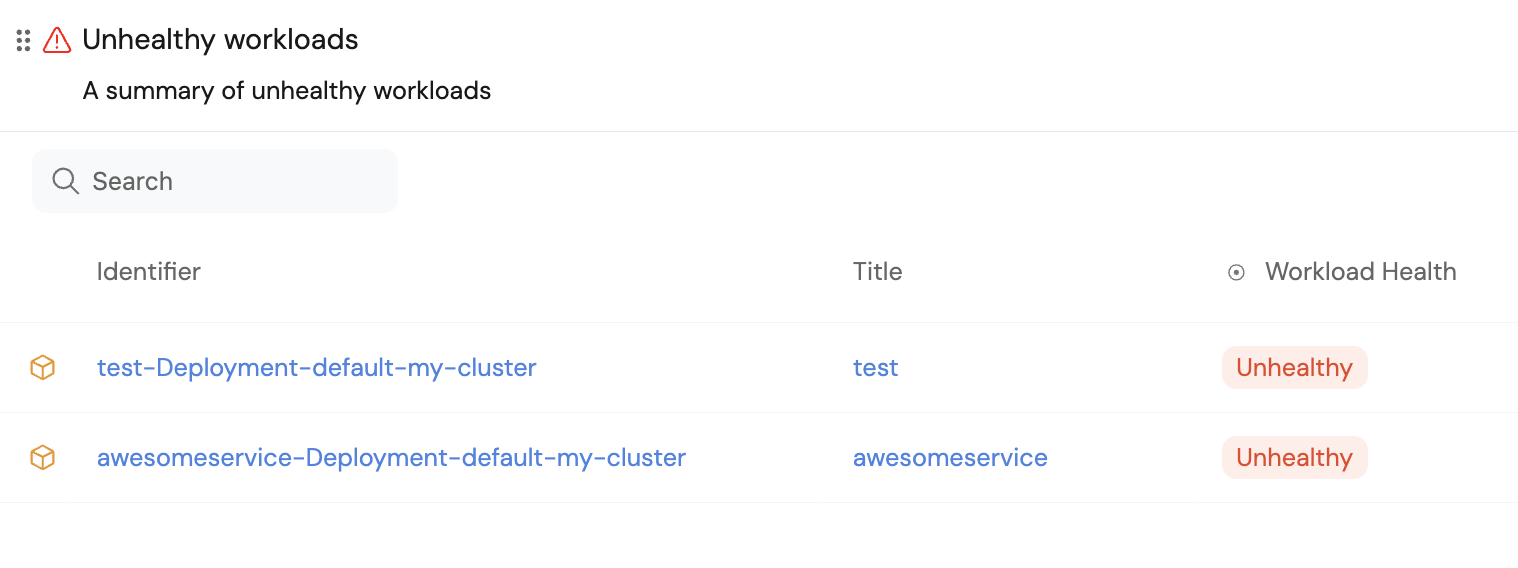

In your new table, click on

Filter, then on+ Add new filter. Fill out the fields like this:

Now you can keep track of services that need your attention right from your homepage.

These services were not included in this guide, but serve to show an example of how this table might look.

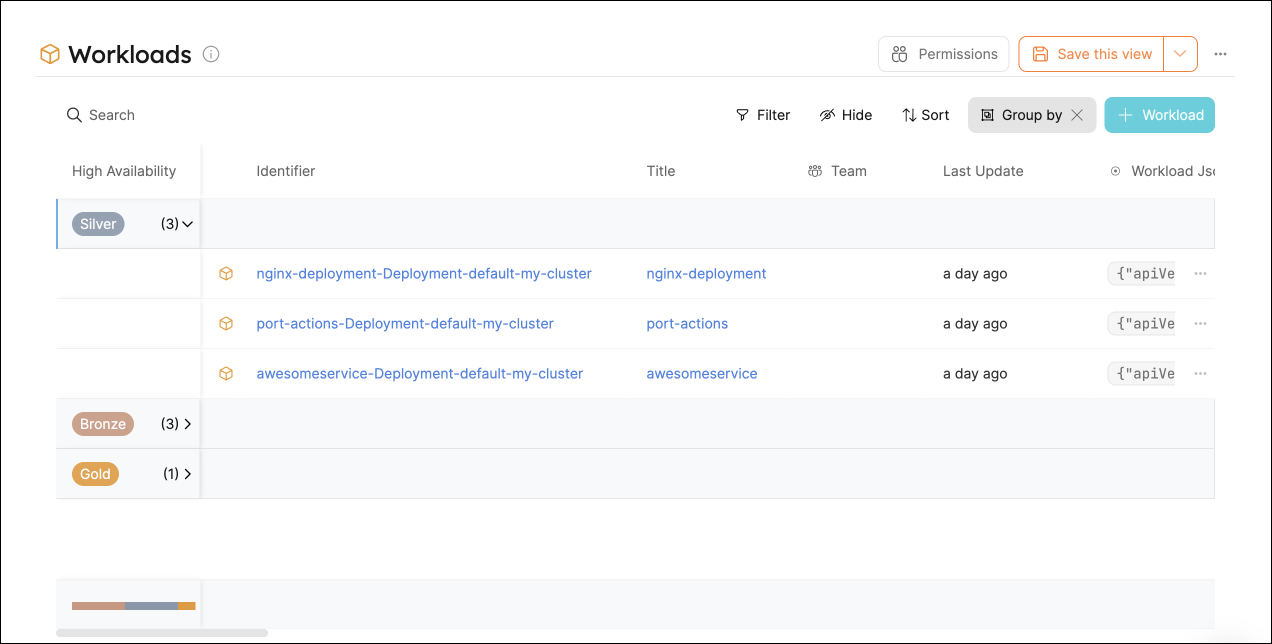

Use your scorecards to get a clear overview of your workloads' availability

In the configuration provided for this guide, the availability metric is defined like this:

- Bronze: >=1 replica

- Silver: >=2 replicas

- Gold: >=3 replicas
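The thresholds above amount to a simple tiering function. The sketch below assumes that a workload with zero replicas falls into a lowest level called `"Basic"`; this guide does not name that level, so treat it as an assumption. The sample replica counts are hypothetical.

```python
from collections import defaultdict


def availability_level(replicas: int) -> str:
    """Map a replica count to the availability level listed in this guide."""
    if replicas >= 3:
        return "Gold"
    if replicas >= 2:
        return "Silver"
    if replicas >= 1:
        return "Bronze"
    return "Basic"  # assumed name for "no level reached"


def group_by_level(replica_counts: dict) -> dict:
    """Group workload names by level, similar to a group-by on the scorecard."""
    groups = defaultdict(list)
    for name, replicas in replica_counts.items():
        groups[availability_level(replicas)].append(name)
    return dict(groups)


# Hypothetical workloads and replica counts.
print(group_by_level({"awesomeapp": 2, "checkout": 3, "batch-job": 0}))
```

Because the checks are cumulative, a workload with three replicas also satisfies the Bronze and Silver rules and is reported at the highest level it reaches.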

To get an overall picture of our workloads' availability, we can use a table operation.

-

Go to the

Workloadscatalog page. -

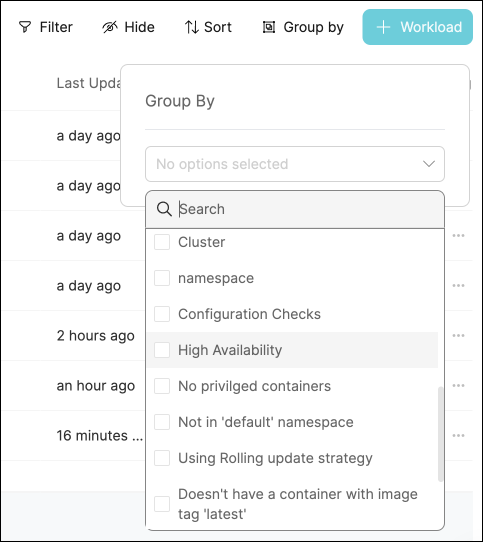

Click on the

Group bybutton, then chooseHigh availabilityfrom the dropdown:

- Click on any of the metric levels to see the corresponding workloads:

Note that you can also set this as the default view by clicking on the Save this view button 📝

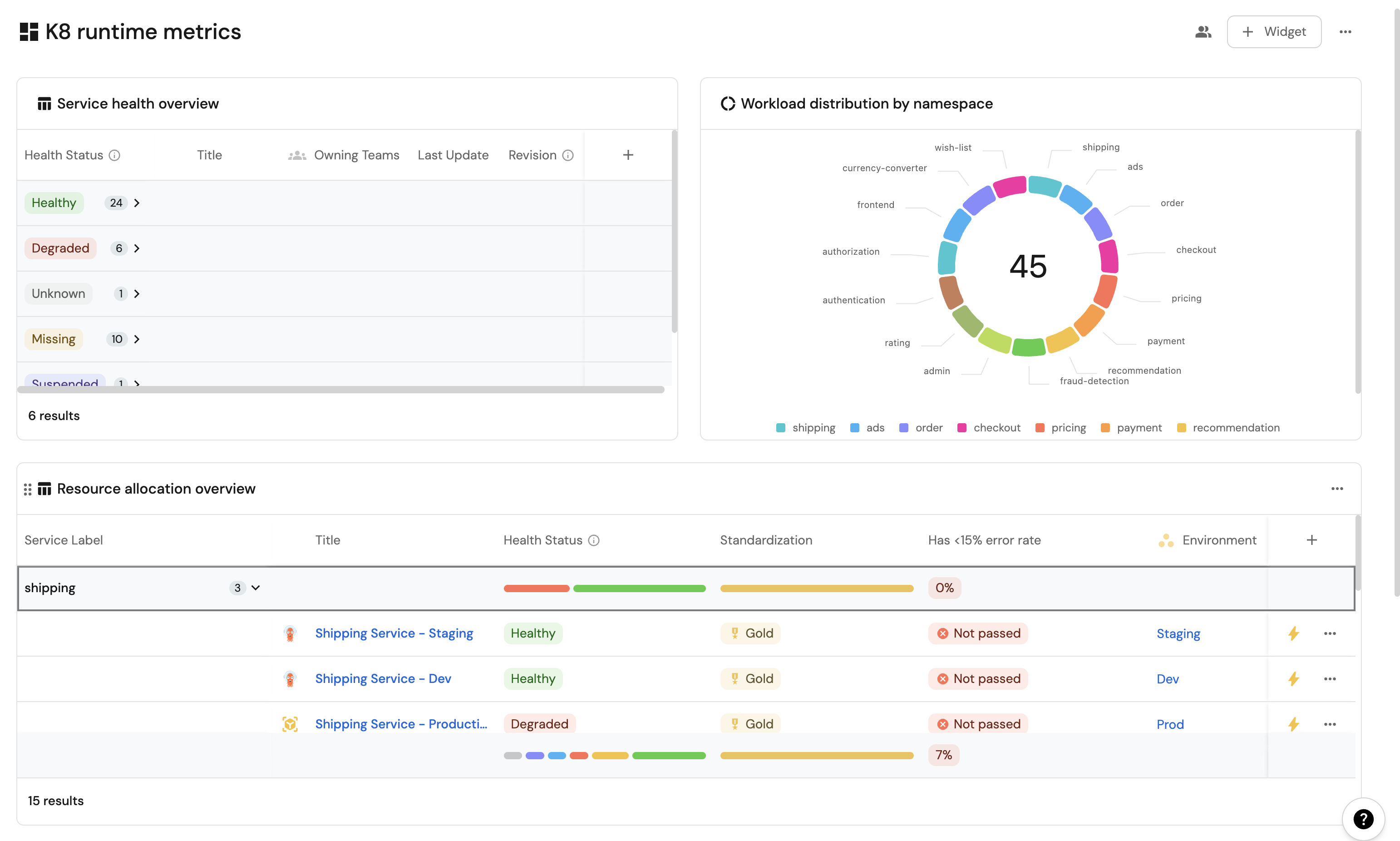

Visualization

By leveraging Port's dashboards, you can create custom views to track your Kubernetes runtime metrics and monitor your services' performance over time.

Dashboard setup

- Go to your software catalog.

- Click on the + New button in the left sidebar.

- Select New dashboard.

- Name the dashboard K8s Runtime Metrics.

- Choose an icon (optional).

- Click Create.

Add widgets

In your new dashboard, create the following widgets:

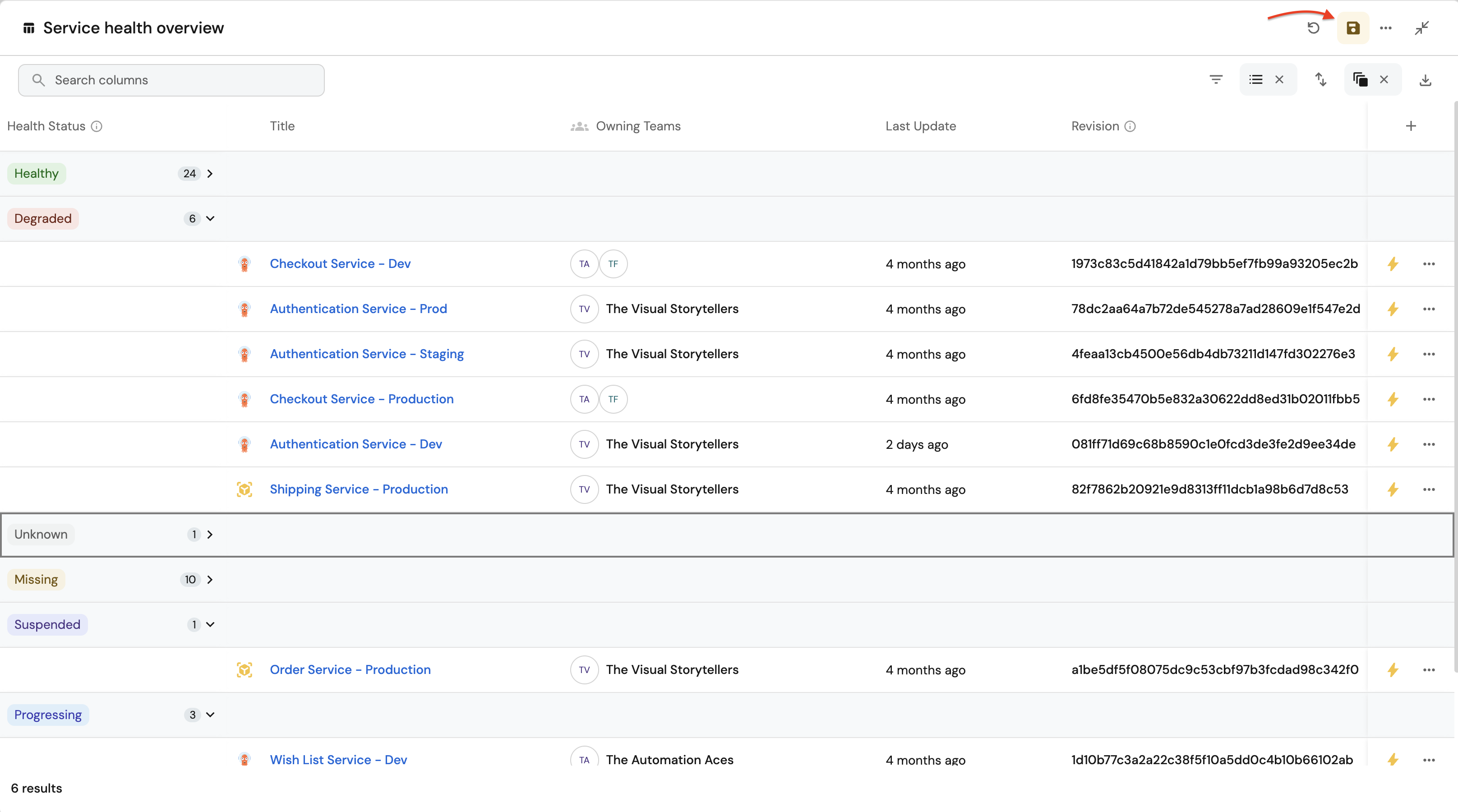

Service health overview

- Click + Widget and select Table.

- Type Service health overview in the Title field.

- Choose an icon (optional).

- Choose Workload as the Blueprint.

- Click on Save.

- Click on the ... on the widget and select Customize table.

- Click on the Group by any Column icon and select Health Status.

- Click on Manage properties and add the following:

  - Title

  - Owning Team

  - Revision

  - Last Update

- Click on the Save icon.

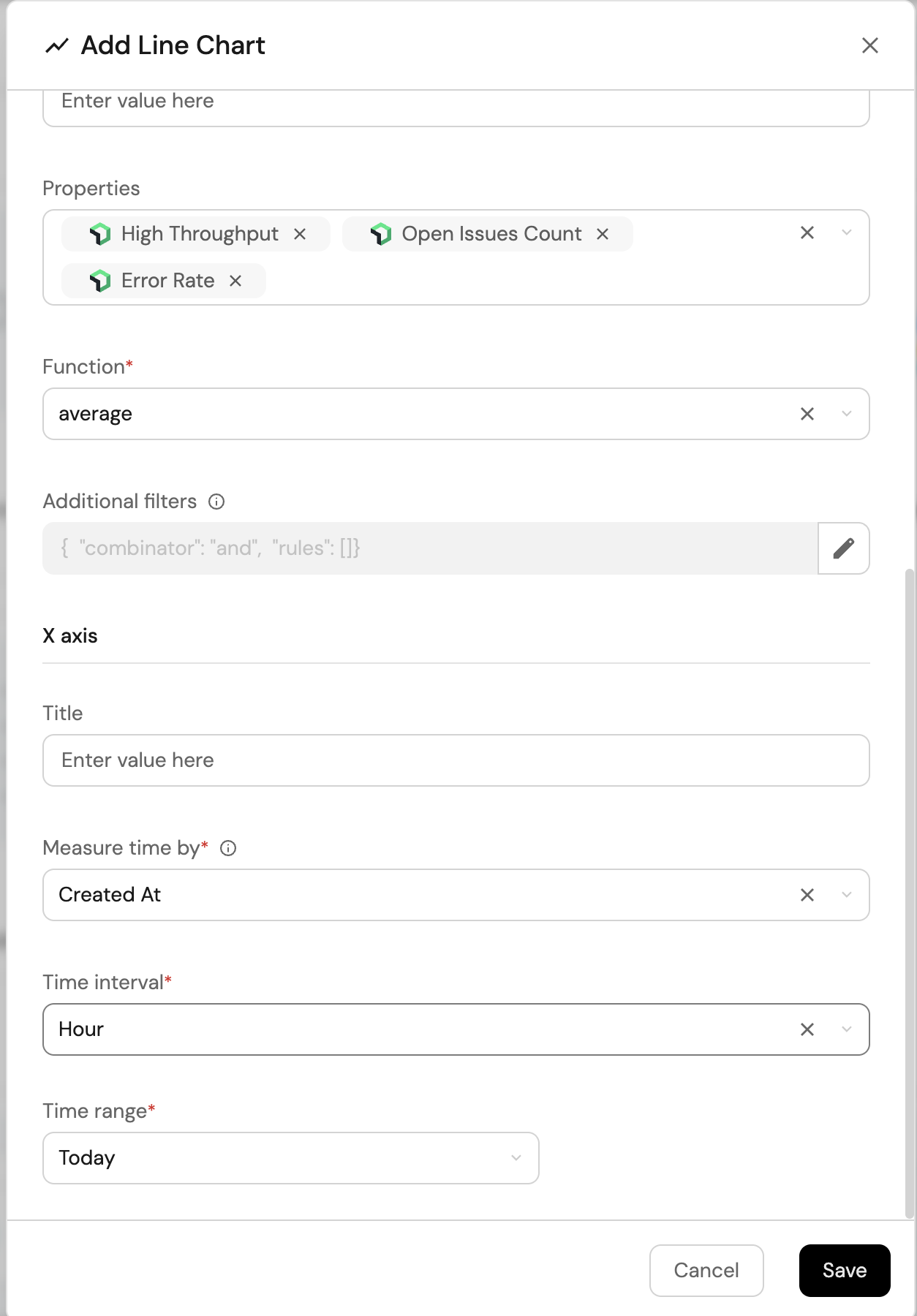

Resource usage trends

- Click + Widget and select Line Chart.

- Type Resource usage trends in the Title field.

- Choose an icon (optional).

- Choose Aggregate Property (All Entities) as the Chart type.

- Choose Workload as the Blueprint.

- Choose High Throughput, Open Issues Count, Error Rate as the Y axis Property.

- Choose createdAt as the X axis Property.

- Select Hour for Time interval.

- Select Today for Time range.

- Click on Save.

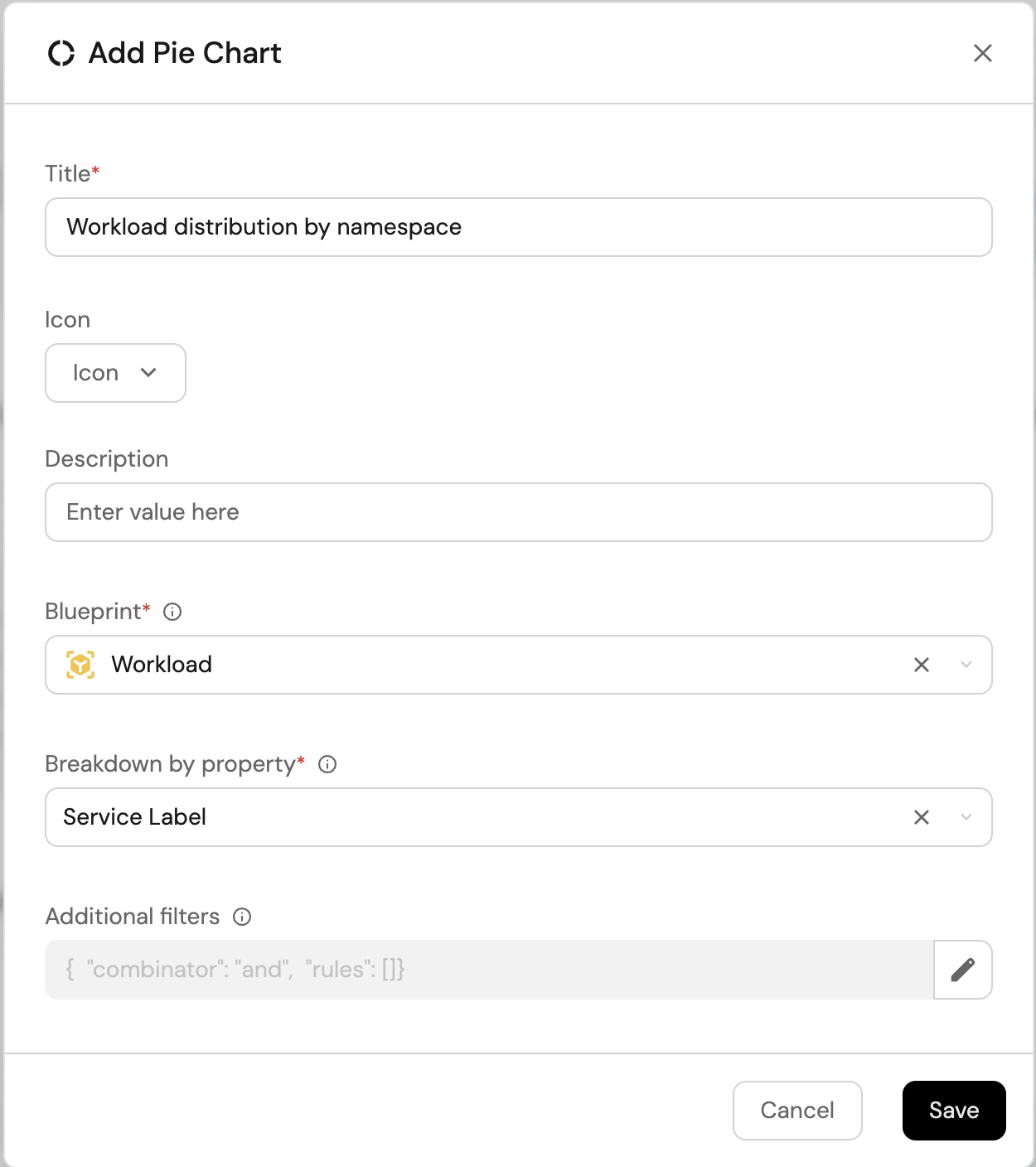

Namespace distribution

- Click + Widget and select Pie Chart.

- Title: Workload distribution by namespace.

- Choose an icon (optional).

- Select Workload as the Blueprint.

- Choose Service Label as the Breakdown by Property.

- Click on Save.

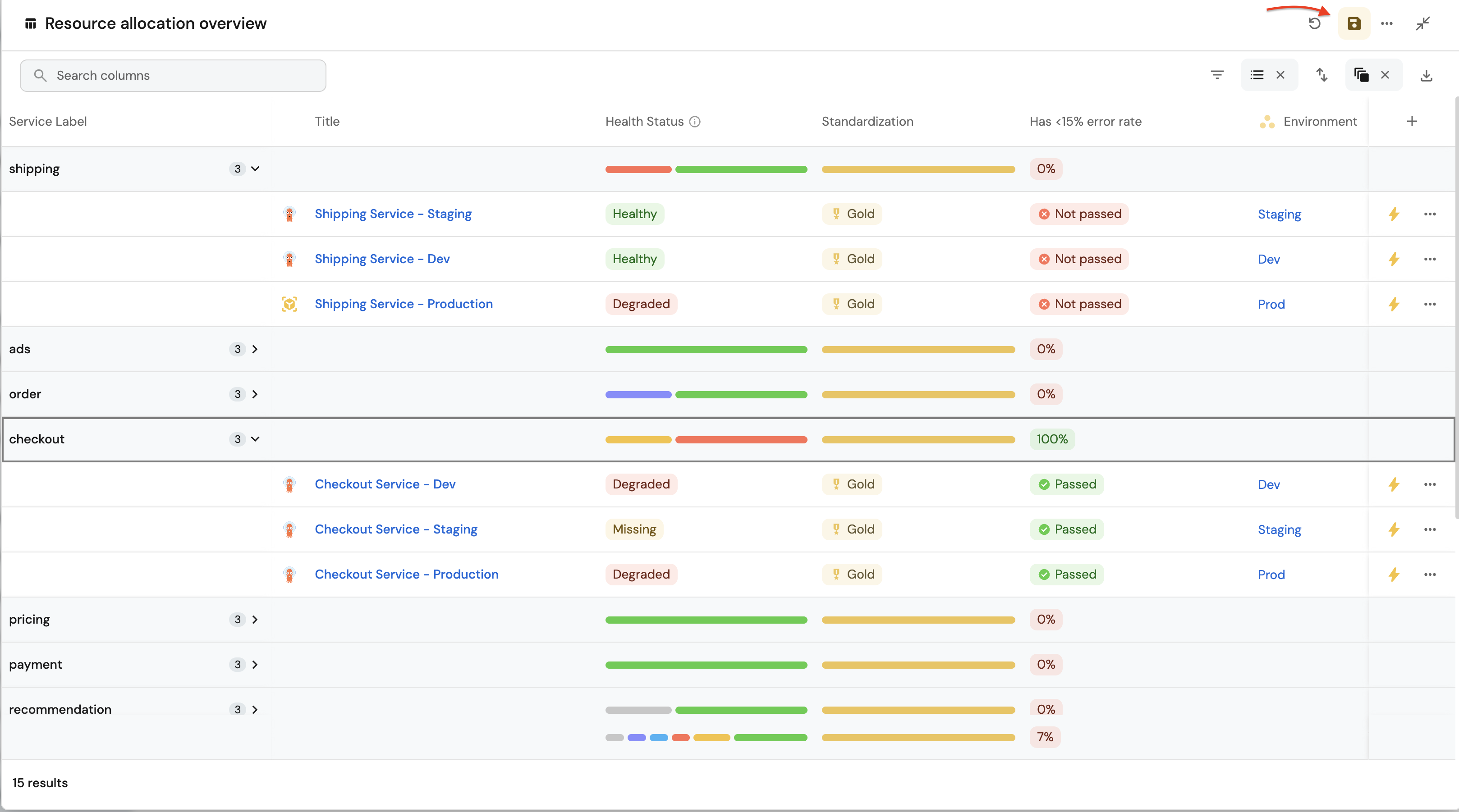

Resource allocation overview

- Click + Widget and select Table.

- Title: Resource allocation overview.

- Choose an icon (optional).

- Select Workload as the Blueprint.

- Click on Save.

- Click on the ... on the widget and select Customize table.

- Click on the Group by any Column icon and select Service Label.

- Click on Manage properties and add the following:

  - Title

  - Health Status

  - Standardization

  - Environment

- Click on the Save icon.

These widgets will give you a comprehensive view of your Kubernetes runtime, making it easy to monitor service health, resource usage, and deployment status across your cluster.

Possible daily routine integrations

- Send a Slack message in the R&D channel to let everyone know that a new deployment was created.

- Notify DevOps engineers when a service's availability drops.

- Send a weekly/monthly report to R&D managers displaying the health of services' production runtime.

Conclusion

Kubernetes is a complex environment that requires high-quality observability. Port's Kubernetes integration allows you to easily model and visualize your Kubernetes resources, and integrate them into your daily routine.

Customize your views to display the data that matters to you, grouped or filtered by teams, namespaces, or any other criteria.

With Port, you can seamlessly fit your organization's needs, and create a single source of truth for your Kubernetes resources.

More guides and tutorials will be available soon. In the meantime, feel free to reach out with any questions via our community Slack or GitHub project.