Troubleshooting

This page contains answers to common questions and issues that users encounter when using Port, organized by topic.

General

Do I need an expert to set up an internal developer portal with Port?

Port was designed to let you set up a developer portal in minutes, quickly defining your data model and then ingesting data about software and resources into it.

We believe in "bring your own data model" since each organization differs in how it wants to set up Port and model its software. Our documentation and guides can help you get started.

If you're trying to find out whether Port is right for you, you can reach out to us by scheduling an in-person demo, and we'll be happy to walk you through the process of building a portal that fits your needs.

Why not Backstage?

Spotify's Backstage is spot-on in recognizing the need for a streamlined end-to-end development environment. It is also flexible, which lets you build your software catalog according to your data model.

However, it requires coding, personnel to implement it, and domain expertise. You also need to invest in deployment, configuration and updates. You can read a detailed comparison of Port and Backstage here.

Is Port really free?

Port is free for up to 15 users; check our pricing page for more information. With the free version of Port you can set up a modern, fully functioning internal developer portal.

The free version includes all of Port's features except SSO and, for reasons of fair use, limits the software catalog to 10,000 entities.

If you are evaluating Port, the free version provides everything you need. If you need SSO for a limited period, contact us.

Can I self-host Port?

Port is a multi-tenant SaaS product. While there is no option to fully self-host Port, specific elements of Port such as integrations and the Port Agent (which is used to trigger self-service actions and automations) can be self-hosted on your premises.

Enterprises with specific security, governance, or regulatory needs can receive a single-tenant deployment of Port. To learn more about the single-tenant offering, please contact your sales representative.

While not as robust as a self-hosted option, Port does offer security-oriented features such as support for AWS Private Link. To learn more about our Private Link support, click here.

Does Port's web application have session timeouts?

There is an inactivity timeout of 3 days, and an absolute session limit of 7 days.

If you are inactive for just under 3 days (for example, 2 days, 23 hours and 59 minutes) and then perform any action, you will stay logged in.

In any case, after 7 days you will need to log in again.
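
The interaction between the two timeouts can be sketched as follows. This is only an illustration of the rule described above, not Port's implementation: the function name and the exact boundary behavior (strictly less than the limit) are assumptions.

```python
from datetime import datetime, timedelta

INACTIVITY_TIMEOUT = timedelta(days=3)  # inactivity timeout stated above
ABSOLUTE_TIMEOUT = timedelta(days=7)    # absolute limit stated above


def session_is_valid(logged_in_at: datetime, last_active_at: datetime, now: datetime) -> bool:
    """Return True while both the inactivity and the absolute limit are satisfied."""
    within_inactivity = now - last_active_at < INACTIVITY_TIMEOUT
    within_absolute = now - logged_in_at < ABSOLUTE_TIMEOUT
    return within_inactivity and within_absolute
```

For example, a user who logged in on day 0 and was last active on day 2 is still logged in on day 4, but no amount of activity keeps the session alive past day 7.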

Organization

How can I set up SSO for my organization?

- Set up the application in your SSO dashboard by following the manage your SSO connection documentation.

- Reach out to us with the required credentials in order to complete the setup.

- After completing the setup, Port will provide you with the CONNECTION_NAME. Head back to the documentation and replace it where needed.

How can I troubleshoot my SSO connection?

- Make sure the user has permissions to use the application.

- Look at the URL of the error page; the error details are sometimes embedded in it. For example, look at the following URL:

https://app.getport.io/?error=access_denied&error_description=access_denied%20(User%20is%20not%20assigned%20to%20the%20client%20application.)&state=*********

After the error_description, you can see User%20is%20not%20assigned%20to%20the%20client%20application. In this case, the user is not assigned to the SSO application, and therefore cannot access Port through it.

- Make sure you are using the correct CONNECTION_NAME provided to you by Port, and that the application is set up correctly according to our setup docs.
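
If the URL-encoded error is hard to read, you can decode it with a few lines of Python. This is a minimal sketch using only the standard library; the helper name describe_sso_error is made up for illustration.

```python
from urllib.parse import parse_qs, urlsplit


def describe_sso_error(url: str) -> dict:
    """Extract and URL-decode the error fields from an SSO error URL."""
    query = parse_qs(urlsplit(url).query)
    return {
        "error": query.get("error", [None])[0],
        "error_description": query.get("error_description", [None])[0],
    }


url = (
    "https://app.getport.io/?error=access_denied"
    "&error_description=access_denied%20(User%20is%20not%20assigned"
    "%20to%20the%20client%20application.)&state=*********"
)
print(describe_sso_error(url)["error_description"])
# prints: access_denied (User is not assigned to the client application.)
```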

Why can't I invite another member to my portal?

When using the free tier, Port allows you to be connected to a single organization. If your colleague is in another organization, you will not be able to invite them.

Reach out to us via chat, Slack, or the support site at support.port.io, and we will help you resolve the issue.

How do I delete my organization?

To delete your organization, reach out to Port's support team by submitting a request in the support center.

Ocean integrations

Why is my Ocean integration not working?

If you are facing issues after installing an Ocean integration, follow these steps:

- Make sure all of the parameters you provided in the installation command are correct.

- Go to the audit log in your Port application and check for any errors in the creation of your blueprints and/or entities.

- In your builder page, make sure that the new blueprints were created with the correct properties/relations.

- If you tried to install a self-hosted integration, check the integration's documentation to ensure you included the necessary parameters.

- When running self-hosted integrations over TLS, make sure the PEM you mount contains the full certificate chain (service certificate, intermediate certificate(s), and the issuing root) in leaf-to-root order. Missing intermediates cause CERTIFICATE_VERIFY_FAILED errors inside the Ocean container even if local curl commands succeed.

  How to create a PEM bundle: Combine the service (leaf) certificate + intermediate CA certificate(s) + root CA certificate into a single PEM bundle and ensure your endpoints present the chain in order. This avoids unknown authority errors and hostname-mismatch headaches.
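
As a rough illustration of the PEM bundle tip, the sketch below concatenates certificate files in the order you pass them (leaf first, root last). The function name and the file names in the usage note are hypothetical, and the check is only a sanity check on the file contents, not a validation of the chain itself.

```python
from pathlib import Path


def build_pem_bundle(cert_paths, bundle_path):
    """Concatenate PEM certificates in the given order (leaf first, root last)."""
    blocks = []
    for path in cert_paths:
        text = Path(path).read_text().strip()
        if "-----BEGIN CERTIFICATE-----" not in text:
            raise ValueError(f"{path} does not look like a PEM certificate")
        blocks.append(text)
    # One certificate after another, separated by newlines, with a trailing newline.
    Path(bundle_path).write_text("\n".join(blocks) + "\n")
    return len(blocks)
```

A call such as build_pem_bundle(["service.pem", "intermediate.pem", "root.pem"], "bundle.pem") would produce the file you then mount into the integration container.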

If you are still facing issues, reach out to us using chat/Slack/mail to support.port.io, and we will help you resolve the issue.

Does my Ocean integration mapping change when I upgrade to a newer version?

No, when you upgrade an Ocean integration to a newer version, your existing mapping configuration is preserved and not automatically changed.

The integration will continue using your custom mapping configuration after the upgrade. If you want to adopt new default mappings from the upgraded version, you need to manually update the mapping configuration.

Why am I getting validation errors when starting my Ocean integration?

Validation errors occur when required configuration parameters are missing or incorrectly formatted. You will typically see errors like this in your logs:

pydantic.error_wrappers.ValidationError: 3 validation errors for Config

jira_host

field required (type=value_error.missing)

atlassian_user_email

field required (type=value_error.missing)

To resolve this:

- Check the integration's documentation for all required parameters.

- Verify that all required environment variables or configuration values are provided.

- Ensure credentials like PORT_CLIENT_ID, PORT_CLIENT_SECRET, and integration-specific API tokens are correct.

- For complex parameters (like GitLab's tokenMapping), ensure the value is properly formatted as valid JSON or follows the expected structure.

Example of a common formatting error:

pydantic.error_wrappers.ValidationError: 1 validation error for Config

token_mapping

value is not a valid dict (type=type_error.dict)

This indicates the tokenMapping parameter is not properly formatted. Refer to the GitLab token mapping docs for the correct format.
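
Before passing a JSON-valued parameter to the integration, you can check locally that it parses to a JSON object, which is what the "not a valid dict" error is complaining about. This is a minimal sketch; the helper name and the sample values are placeholders, not the real tokenMapping format.

```python
import json


def check_json_object(raw: str) -> dict:
    """Parse raw as JSON and require the result to be a JSON object (a Python dict)."""
    try:
        value = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(value, dict):
        raise ValueError(f"expected a JSON object, got {type(value).__name__}")
    return value


# Placeholder value for illustration only.
print(check_json_object('{"example-key": ["example-value"]}'))
```

A value such as a bare string or a list passes json.loads but still fails the dict check, which mirrors the validation error shown above.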

Why is my CI/CD-based Ocean integration failing?

When running Ocean integrations via CI/CD pipelines (GitHub Actions, GitLab CI, etc.), common issues include:

- Secrets not configured: Ensure all required secrets (PORT_CLIENT_ID, PORT_CLIENT_SECRET, integration tokens) are properly configured in your CI/CD platform and passed to the workflow step.

- Workflow syntax errors: Verify your workflow file syntax is correct and the integration step is properly configured.

- Parameter escaping issues: Some parameters require specific formatting. For example, JSON objects may need to be escaped differently depending on your CI/CD platform.

- Network restrictions: Some CI/CD runners have restricted network access. Ensure outbound connections to Port's API (api.port.io) are allowed.

To debug, check the workflow logs for specific error messages and refer to the integration's installation documentation for CI/CD-specific examples.
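
One quick way to rule out the "secrets not configured" case is to run a small check as the first step of the job. The sketch below is an assumption about how you might do this; the helper name is made up, and you would extend the list with whatever integration-specific variables your installation docs require.

```python
import os

# Variables named in this answer; add integration-specific ones as needed.
REQUIRED_VARS = ["PORT_CLIENT_ID", "PORT_CLIENT_SECRET"]


def missing_variables(required, env=None):
    """Return the names of required variables that are unset or blank."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]


missing = missing_variables(REQUIRED_VARS)
if missing:
    print("Missing or empty:", ", ".join(missing))
```

The check reports only variable names, never values, so it is safe to leave in pipeline logs.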

Why are my entities not being created or mapped correctly?

If your integration is running but entities are not appearing in your catalog as expected, the issue is likely in your mapping configuration.

Common causes:

- JQ syntax errors: The mapping uses JQ expressions to extract data. Verify your JQ syntax is correct using a JQ playground.

- Missing or null identifiers: Each entity requires a unique identifier. If the identifier mapping returns null or empty, the entity will not be created. Check your logs for messages like:

  X transformations of batch failed due to empty, null or missing values

- Incorrect property paths: Ensure the JQ paths in your mapping match the actual structure of the source data. If a path doesn't exist, you will see:

  Unable to find valid data for: {foo:.bar} (null, missing, or misconfigured)

- Unknown kind: Make sure you're using a valid kind that the integration supports. Check the integration's documentation for available kinds.

How to debug:

- Go to your Data sources page in Port.

- Select the relevant integration.

- Click on the Event log tab to view detailed logs from the integration, including any mapping errors or warnings.

For help with mapping syntax, see the mapping configuration guide.

Why can't my Ocean integration connect to Port?

If your integration starts but cannot communicate with Port, it's typically a network configuration issue in your environment.

Common causes:

- Firewall restrictions: Ensure your network allows outbound HTTPS connections to api.port.io (EU region) or api.us.getport.io (US region).

- Proxy configuration: If your environment uses a proxy, configure the integration to use it via the appropriate environment variables (HTTP_PROXY, HTTPS_PROXY).

- DNS resolution: Verify that your environment can resolve Port's API hostname.

- TLS/SSL issues: If you're using a corporate proxy or custom certificates, ensure the integration trusts the certificate chain. See the TLS troubleshooting tip above for creating a proper PEM bundle.

These are internal network issues that need to be resolved in your environment. Port's support team cannot directly troubleshoot internal network configurations, but can help verify there are no issues on Port's side.
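
To narrow down which of the causes above applies, you can run a small check from the same host or container as the integration. The sketch below tests DNS resolution and a direct TCP connection only; it is an illustration with a made-up function name, it does not go through any HTTP proxy, and it does not validate TLS certificates.

```python
import socket


def check_endpoint(host: str, port: int = 443, timeout: float = 5.0) -> dict:
    """Report whether host resolves and accepts a direct TCP connection."""
    result = {"host": host, "dns": False, "tcp": False, "error": None}
    try:
        addresses = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
        result["dns"] = True
        family, socktype, proto, _, sockaddr = addresses[0]
        with socket.socket(family, socktype, proto) as sock:
            sock.settimeout(timeout)
            sock.connect(sockaddr)
        result["tcp"] = True
    except OSError as exc:  # covers DNS failures, refusals, and timeouts
        result["error"] = str(exc)
    return result


# Example (performs real network calls when uncommented):
# print(check_endpoint("api.port.io"))
```

If dns is False, start with DNS resolution; if dns is True but tcp is False, a firewall or a mandatory proxy is the more likely culprit.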

Actions

After triggering an Action in Port, why is it stuck "in progress" and nothing happens in the Git provider?

Please make sure that:

- The action backend is set up correctly. This includes the Organization/Group name, repository and workflow file name.

- For GitLab, make sure the Port execution agent is installed properly. When triggering the action, you can view the logs of the agent to see what URL was triggered.

Catalog

How can I export my catalog data?

Port allows you to easily export any catalog data in one of the following formats:

- JSON

- GitOps (.yml)

- HCL (.tf)

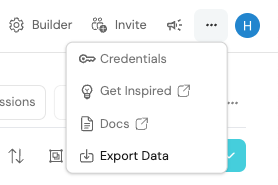

To export your data:

- In your Port application, click on your profile picture and choose Export Data.

- Choose one or more blueprints, choose a format, and click Export.

This will download a file with all entities of the selected blueprints in the chosen format.

One of my catalog pages is not displaying all entities, or some data is missing, why is that?

- Check for table filters in the top right. Make sure no filter is applied, or no property is hidden.

- Sometimes users apply initial filters to increase the loading speed of the catalog page. Make sure your missing entity is not being filtered out.

What can I embed in the iframe widget?

An iframe is a more limited web environment than a dedicated browser tab, and iframes are generally a less secure option, so not all services support them. For example, Grafana Cloud does not allow iframe embedding of its content, while self-hosted Grafana does.

To find out whether you can embed your desired content, first check with the service provider whether cross-site embedding is supported. After confirming you can embed the relevant content, here are two different approaches for embedding sensitive data:

- Public page behind a VPN: If the content is self-hosted and accessible when users are logged in to your VPN, you can make it publicly accessible to users that are logged in to your VPN. The embedded resource is still secure, because access to it is limited to users with access to the VPN, and it can be viewed in Port without a dedicated authentication flow (again, assuming the user is logged in to the VPN).

  For example, with a self-hosted Grafana that is accessible from your VPN, you can simply make a dashboard public and embed it, and users who are logged in to your VPN will see it embedded in Port correctly.

- PKCE: Port supports the PKCE authentication flow to authenticate the logged-in user with an OIDC application against your IdP to gain access. This requires the end service you are trying to embed (for example, a dashboard from self-hosted Grafana) to support OIDC, in order to use the SSO application. To set it up, follow the documentation and make sure you do the following:

  - Create a new application in your IdP and configure the widget to use the correct application ID.

  - Configure the end service with the application credentials, in order to receive the authentication requests.

  - Make sure the application is able to get a JWT from the token URL. This is how the application authenticates the user.
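
For background on what PKCE involves, the sketch below shows how a client typically derives the code verifier and the S256 code challenge, as defined in RFC 7636. It is a generic illustration and not Port's widget code; the function names are made up.

```python
import base64
import hashlib
import secrets


def make_code_verifier(num_bytes: int = 32) -> str:
    """Random, URL-safe code verifier (43 characters for 32 random bytes)."""
    raw = secrets.token_bytes(num_bytes)
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def make_code_challenge(verifier: str) -> str:
    """S256 challenge: base64url(SHA-256(verifier)) without padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


verifier = make_code_verifier()
challenge = make_code_challenge(verifier)
```

The client sends the challenge with the authorization request and the verifier with the token request, so the IdP can confirm that both requests came from the same party.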

Security

What security does Port have in place?

We put a lot of thought into Port’s design to make it secure. Consequently, it doesn’t store secrets or credentials, and doesn't require whitelisting of IPs.

Port uses industry-standard encryption protocols. Data is encrypted both at rest and in transit, with complete isolation between clients, and data access is logged and audited.

Port is SOC2 and ISO/IEC 27001:2022 compliant, and undergoes regular pentests, product security and compliance reviews.

You can find the complete coverage of the security policy in the security page.

Port execution agent

Why does the agent fail with a 401 Unauthorized error on startup?

The agent pod logs show:

ERROR:port_client:Failed to get Port API access token - status: 401,

response: {"ok":false,"error":"invalid_credentials",

"message":"Invalid credentials supplied to the generate token API"}

The agent fails to start and cannot get Kafka credentials.

Cause: The clientId or clientSecret values in the Helm chart are incorrect or empty.

Fix:

- Verify the values in your Helm install command:

  helm install my-port-agent port-labs/port-agent \
    --set port.clientId="YOUR_CLIENT_ID" \
    --set port.clientSecret="YOUR_CLIENT_SECRET" \
    --set port.baseUrl="https://api.getport.io" # or api.us.getport.io for US region

- Verify the baseUrl matches your Port region. US-hosted orgs must use https://api.us.getport.io, not https://api.getport.io.

- Regenerate your client credentials in Port → Settings → Credentials if unsure whether they are valid.

The baseUrl is the most common mistake for US-region customers. Using the EU URL silently returns 401 for US credentials.
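
To test a credential and base URL pair outside the agent, you can build the same kind of token request yourself. The sketch below assumes the token endpoint path is /v1/auth/access_token and that it accepts a JSON body with clientId and clientSecret; confirm both against the API reference before relying on it. The function name is made up.

```python
import json
import urllib.request


def build_token_request(base_url: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Build (but do not send) a token request for the given region's base URL."""
    body = json.dumps({"clientId": client_id, "clientSecret": client_secret}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/auth/access_token",  # assumed endpoint path
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Sending it performs a real API call; a 401 surfaces as urllib.error.HTTPError:
# request = build_token_request("https://api.us.getport.io", "YOUR_CLIENT_ID", "YOUR_CLIENT_SECRET")
# with urllib.request.urlopen(request, timeout=10) as response:
#     print(response.status)
```

Trying the same credentials against both regional base URLs quickly shows whether the 401 comes from the region or from the credentials themselves.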

Why is the agent failing with Kafka consumer group authorization errors?

Agent logs show:

KafkaError{code=GROUP_AUTHORIZATION_FAILED,val=30,

str="FindCoordinator response error: Group authorization failed."}

Cause: The Kafka consumer group ID used by the agent is not authorized for the organization's Kafka topic. This happens when Kafka credentials have not been provisioned for the organization, or when the agent is configured with the wrong organization ID.

Fix:

- Verify your Port organization has Kafka credentials provisioned. Contact Port support to enable Kafka for your org if it was recently created.

- Verify the port.clientId and port.clientSecret belong to the correct organization, because credentials are org-specific.

Why does the agent time out during action execution?

Agent logs show:

ERROR:consumers.kafka_consumer:Failed process message from topic

org_xxxxx.runs, partition 0, offset N:

HTTPSConnectionPool(host='your-backend.example.com', port=443):

Read timed out. (read timeout=30.0)

The action run stays in In Progress indefinitely in Port.

Cause: The backend service (GitHub Actions, GitLab pipeline, webhook endpoint) did not respond within the agent's 30-second read timeout.

Fix:

- Your backend must respond with an HTTP 2xx within 30 seconds of receiving the request. Long-running operations should respond immediately with 200, then report completion asynchronously using Port's run update API.

- Increase the timeout if you control the agent configuration:

--set agent.httpTimeout=60

- Check that your backend host is reachable from the agent pod's network. Corporate VPNs and ACLs frequently block outbound connections from Kubernetes namespaces.
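
The "respond immediately, finish later" pattern from the first fix can be sketched with the standard library as follows. This is an illustration only: report_completion is a hypothetical placeholder for the call to Port's run update API, and a production backend would normally use a proper web framework and job queue.

```python
import json
import queue
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

jobs: "queue.Queue[dict]" = queue.Queue()  # action runs waiting to be processed
finished: list = []                         # payloads that have been processed


def report_completion(payload: dict) -> None:
    """Hypothetical placeholder: report the result to Port's run update API here."""
    finished.append(payload)


def worker() -> None:
    """Process queued runs in the background, outside the request/response cycle."""
    while True:
        payload = jobs.get()
        # ... the long-running work would happen here ...
        report_completion(payload)
        jobs.task_done()


class ActionHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        jobs.put(payload)        # hand off the slow work
        self.send_response(200)  # acknowledge well within the read timeout
        self.send_header("Content-Type", "application/json")
        self.end_headers()
        self.wfile.write(b'{"ok": true}')

    def log_message(self, *args) -> None:
        pass  # keep the sketch quiet


def start_server(port: int = 0) -> ThreadingHTTPServer:
    """Start the background worker and the HTTP server on localhost."""
    threading.Thread(target=worker, daemon=True).start()
    server = ThreadingHTTPServer(("127.0.0.1", port), ActionHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

The handler does nothing slow before sending the 200, so the agent's request returns quickly regardless of how long the queued work takes.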

Why does the agent fail with TLS certificate errors on startup?

The agent fails with SSL or TLS errors when connecting to api.getport.io or to your backend service.

Common causes and fixes:

- Corporate CA not trusted: Mount your corporate CA bundle into the pod and set SSL_CA_BUNDLE=/path/to/ca-bundle.crt in the environment.

- Self-signed cert on backend: Set SSL_VERIFY=false in the agent environment for non-production environments only. Never disable SSL verification in production.

- EKS with custom networking: Ensure the pod's service account has outbound HTTPS access (port 443) to api.getport.io and api.us.getport.io.

Integration bugs

GitHub

Why are live events enabled but data is stale?

You enabled Live events in the GitHub Ocean integration, but entities are not updating in real time. The last catalog update timestamp shows hours-old data despite active PR and push activity.

Causes and fixes:

- Webhook not registered: The integration creates a webhook in GitHub on first sync. If the sync failed or was interrupted, the webhook may not exist. Navigate to your GitHub organization settings, open Webhooks, and verify a Port webhook is present. If not, delete and reinstall the integration.

- Rate limit reached: GitHub's webhook delivery can be delayed under rate limiting. Check the integration event log in Port (Data Sources → your integration → Event log) for rate_limit entries. If present, reduce the number of entity kinds being synced or split the integration across multiple GitHub Apps.

- Webhook payload size exceeded: Repositories with large collaborator lists can exceed GitHub's webhook payload size limit. Affected events are silently dropped. Workaround: use a periodic sync interval instead of live events for repos with more than ~500 collaborators.

Data Sources → your GitHub integration → Event log shows every received webhook event. If the list is empty during active repo activity, the webhook is not registered correctly.

Why are entities not deleted after the source is removed in GitHub?

A GitHub issue, pull request, or repository was closed or deleted in GitHub, but the entity remains in Port with its old status. A full resync does not remove it.

How Ocean deletion works: Port Ocean only deletes an entity during a resync if the entity is absent from the API response for that kind. If your selector query filters out the closed item (for example, because it no longer matches .state == "open"), Ocean does not delete the entity; the item simply falls outside the current sync window.

Fix: To have Port delete entities when they are closed or deleted in GitHub, your selector query must include them in the sync scope so Ocean can compare the full set and identify removals:

- kind: issue
  selector:
    query: "true"

Then set a filter in your blueprint or dashboard to show only open issues, rather than filtering at the selector level.

If you use query: '.state == "open"' in your selector, Ocean will never delete closed issue entities; they simply drop out of the sync window without triggering a deletion.

Why does only a subset of PRs appear in Port?

Your mapping targets all PRs since a date (selector.query: .created_at >= "2025-01-01T00:00:00Z") but only a small fraction appear in Port.

Cause: The GitHub API returns a maximum of 100 pull requests per repository per sync by default when only open PRs are requested. PRs beyond that page are not fetched.

Fix: Include closed PRs in the selector state list (alongside open) and verify your mapping includes the state property to distinguish open, closed, and merged:

- kind: pull-request
  selector:
    query: '.created_at >= "2025-01-01T00:00:00Z"'
    states: ["open", "closed"]

The states list controls which GitHub PR states are returned. The JQ query filters the objects after they are fetched.

Why are workflow runs being deleted on every sync?

Workflow run entities disappear from Port on each resync. You want to preserve historical run data but Port keeps removing it.

Cause: The GitHub API only returns the latest 100 workflow runs per repository. On each resync, Ocean compares the current API response to what is in the catalog and deletes anything not in the response - which is all runs beyond the last 100.

Workaround: Add a ttl property to your workflow run blueprint and set it from your mapping. Port will not delete entities whose TTL has not expired, even if they are absent from the sync window:

properties:
  ttl: .created_at

Alternatively, use Port's data archival feature to cold-tier old runs rather than deleting them.

Why does mapping fail when a related team does not exist?

Ingesting a repository entity fails with a validation error when one of the GitHub teams in the .__teams array does not exist as an entity in Port.

Cause: If you map a relation to an array of identifiers and any one of those identifiers does not exist in the catalog, Port rejects the entire entity upsert.

Fix: use try to skip missing teams:

githubTeams: "[.__teams[] | try (.id | tostring)]"

Fix: subtract known missing IDs:

githubTeams: '([.__teams[].id | tostring] - ["3178082", "8446394"])'

Filtering out missing IDs means those relations will be absent on the entity. The entity is still created - the relation will just be empty for the missing teams.
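
If it helps to see what the second expression computes, here is a rough Python equivalent of the JQ array subtraction. It is only an illustration of the logic, not something you add to the mapping; the function name is made up and the sample team IDs other than the two from the example above are placeholders.

```python
def relation_ids(teams, known_missing):
    """Stringify each team id and drop the ones known to be missing in Port."""
    missing = set(known_missing)
    return [str(team["id"]) for team in teams if str(team["id"]) not in missing]


teams = [{"id": 1001}, {"id": 3178082}, {"id": 1002}, {"id": 8446394}]
print(relation_ids(teams, ["3178082", "8446394"]))
# prints: ['1001', '1002']
```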

Can I reuse the same GitHub App when migrating from legacy to Ocean?

Yes, you can use the same GitHub App for both the legacy integration and the new Ocean-based data source during migration. They write to the same blueprints using the same entity identifiers, so entities will not be duplicated.

Safe migration steps:

- Install the GitHub Ocean integration alongside the existing legacy one.

- Verify Ocean is syncing entities correctly (compare entity counts).

- Remove entity kinds from the legacy integration one at a time.

- Once all kinds are migrated, delete the legacy integration.

Do not remove a kind from the legacy integration before Ocean has completed at least one full sync of that kind. If you remove a kind from legacy first, Ocean will delete those entities on its next reconcile pass since they are now owned by Ocean but absent from its scope.

Why does the GitHub integration show syncing but nothing is running?

The integration status in Data Sources shows a spinning sync indicator but the event log shows no activity and no errors. This persists across browser refreshes.

Cause: This is a known display bug affecting some Ocean integrations where the sync status is not correctly cleared after completion.

Fix: Trigger a manual resync from the Data Sources page. If the integration is self-hosted, restarting the integration pod will also clear the stuck status.

GitLab

Why does resync fail with a missing include_only_active_groups attribute?

The event log shows:

Failed to execute resync function, error: 'Selector' object has

no attribute 'include_only_active_groups'

All file-kind mappings fail during resync.

Cause: This error has two common causes:

- Invalid selector configuration: The mapping references include_only_active_groups but the selector configuration is malformed or contains unsupported fields for the resource kind being synced. This is the most common cause, even on the latest version.

- Outdated integration version: On versions older than 0.5.3, the include_only_active_groups attribute is not available in the Selector class.

Fix:

- Verify your selector configuration is valid for each resource kind in your mapping. Ensure that include_only_active_groups is only used in supported selector contexts and that there are no typos or extra fields.

- Update the integration to the latest version:

  - Port-hosted: Data Sources → your GitLab integration → click Update if available.

  - Self-hosted: pull the latest image.

- If updating immediately is not possible, remove any reference to include_only_active_groups from your integration configuration and trigger a manual resync.

Why are projects not visible after setting up a group access token?

After creating a GitLab group access token and installing the integration, fewer projects appear than expected or none at all.

Cause: Group access tokens have scope-based visibility. A token created without the read_api scope, or created at the wrong group level, will return an empty or partial project list without error.

Fix:

- Verify the token has the read_api scope (minimum required).

- Verify the token is created at the top-level group, not a subgroup, if you want to ingest projects across the full group hierarchy.

- If you only see projects from one subgroup, the token is scoped to that subgroup only.

What are common GitLab integration version compatibility issues?

When upgrading a self-hosted GitLab integration, breaking changes between minor versions can cause silent failures or incomplete syncs. Always check the integration's changelog before upgrading.

Common version-specific issues:

- v0.6.1: Missing ref parameter causes file-kind mapping failures. Upgrade to v0.6.3 or later.

- v0.6.2: include_only_active_groups attribute error (see the entry above).

- v2 sandbox: Integration may fail in sandbox environments due to stricter SSL verification defaults. Set SSL_VERIFY=false in the integration config for non-production environments only.

Azure DevOps

Why is a deleted repository not reflected in Port?

A repository deleted in Azure DevOps remains as an entity in Port. This affects versions 0.7.44 and 0.7.5 of the ADO integration.

Cause: A bug in those versions caused the reconcile phase to skip repository deletion events. Other entity kinds (branches, pull requests) handle deletion correctly.

Fix: Update the ADO integration to the latest version via Data Sources → your ADO integration → check for updates. If you are self-hosted, pull the latest image tag.

Why does the Azure DevOps sync appear to run but nothing happens?

The Data Sources page shows a sync running (spinner or timestamp updating), but no entities are created, updated, or deleted. The event log shows no errors.

Cause: This typically happens when the integration's service hooks in Azure DevOps were not created correctly, or when the integration pod restarted mid-sync and the previous run's state was not cleared.

Diagnostic steps:

- Navigate to your Azure DevOps project → Project Settings → Service hooks. Verify Port webhooks exist. If not, the integration is not receiving events.

- Check the integration event log in Port for abort or resync_aborted entries; these indicate the sync was interrupted.

- Trigger a manual full resync from Data Sources.

PAT and service hook setup: If service hooks fail to create when installing the integration, verify your Personal Access Token (PAT) has the following scopes: Code (Read), Build (Read), Release (Read), Work Items (Read), Service Hooks (Read & Write). The Service Hooks scope is required for the integration to register webhooks automatically.

Why are tests not ingesting despite having many builds?

You have a large number of builds but only a fraction of test results are ingested, with very few builds matched to tests.

Cause: The ADO integration paginates test results per build, but large build histories can exceed the default page limit before all tests are fetched. The integration logs no errors in this case.

Fix:

- Reduce the build history window in your selector:

- kind: test-run
  selector:
    query: '.createdDate >= (now - 7776000 | todate)'

The example above limits ingestion to roughly the last 90 days.

- Alternatively, filter to specific build definitions rather than ingesting all builds.
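
The number 7776000 in the selector is simply 90 days expressed in seconds. If you want a different window, the sketch below shows the arithmetic and the cutoff timestamp the query compares against; the function name is made up for illustration.

```python
from datetime import datetime, timedelta, timezone

WINDOW = timedelta(days=90)
window_seconds = int(WINDOW.total_seconds())  # 90 * 24 * 60 * 60
print(window_seconds)  # prints: 7776000


def cutoff_timestamp(now: datetime) -> str:
    """ISO 8601 UTC timestamp marking the start of the ingestion window."""
    return (now - WINDOW).strftime("%Y-%m-%dT%H:%M:%SZ")


print(cutoff_timestamp(datetime(2025, 4, 1, tzinfo=timezone.utc)))
# prints: 2025-01-01T00:00:00Z
```

For a 30-day window, for example, you would use 2592000 in place of 7776000.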

Why is file ingestion inconsistent across files in the same repository?

Some files are synced correctly but others (for example service-catalog.yml) are skipped or show a perpetual syncing state.

Cause: Large files or files near the ADO API response size limit can trigger buffer overflow errors that are not surfaced in the Port event log.

Fix:

- Check the integration pod logs directly for buffer limit or timeout errors.

- Add a size filter to your file selector to exclude files above a certain threshold.

- If using self-hosted, increase the pod's memory limit.

API

To troubleshoot requests to Port's API, refer to the API troubleshooting section.